AI systems do not stay still after release. The model may be the same file on disk, but the data around it, user behavior, and the way people connect it to other systems change, too.

That is why periodic reviews are not enough for AI security. A quarterly check can tell you what looked safe at one point. It cannot tell you what the system started doing last Tuesday after a new integration, a prompt change, or a vendor update.

What Continuous Monitoring Means in AI Security Contexts

AI security continuous monitoring is the routine collection and review of signals from AI systems while they are actually running. It is not just uptime monitoring with a new label. The useful version examines model behavior, access patterns, data movement, changes in dependencies, prompts, outputs, and unusual decisions.

A normal application can contain security bugs that remain unpatched until someone exploits them. AI systems are messier. A model can drift when the input data changes. A retrieval pipeline can start pulling from the wrong source. A prompt template can expose internal context after a small product change. Testing before release helps, but it only covers the conditions you thought to test.

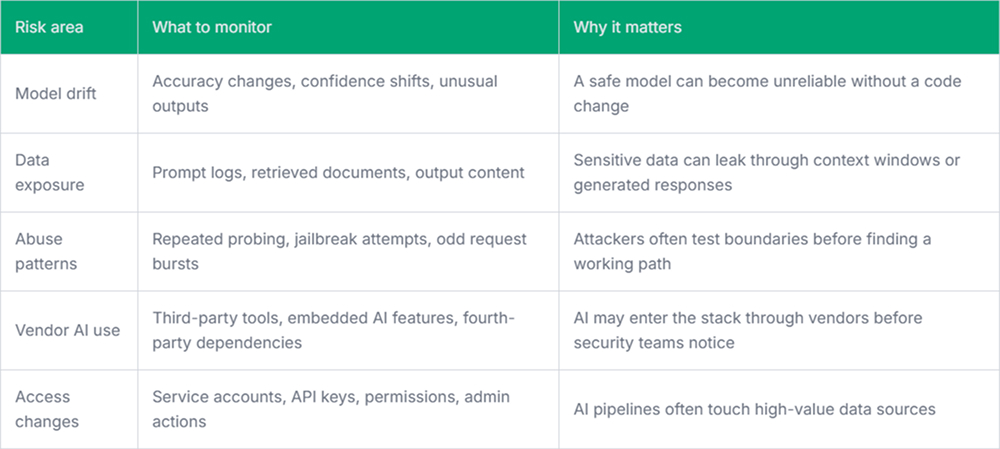

Key AI-Related Risks That Require Ongoing Monitoring

Some AI security risks are hard to spot during design review because they depend on runtime behavior. You only see them after real users, real data, and real integrations are involved.

The vendor side is easy to underestimate. A team may not buy an “AI product” directly, but an existing SaaS tool can add AI features, send data to another processor, or introduce a dependency that was not part of the original review. This is where a tool like Pluto Security can be most effective, providing teams with real-time visibility into AI workspace activity, risk context, and guardrails for AI-driven workflows.

Signals Worth Watching

Not every metric deserves an alert. Too many alerts create noise, and engineers learn to ignore them. Start with signals that point to security or reliability changes, then tune from real incidents and false positives.

- Prompt injection attempts: Track repeated instructions that try to override system behavior, reveal hidden prompts, or bypass tool restrictions.

- Sensitive output rate: Watch for generated responses that include secrets, personal data, internal URLs, or policy-restricted content.

- Retrieval mismatch rate: Measure cases where the AI pulls documents from unexpected sources or returns answers that do not match the retrieved context.

- Model behavior drift: Compare current outputs against known evaluation sets, production feedback, and business-critical scenarios.

- Unusual tool usage: Flag unexpected calls to internal APIs, high-volume lookups, or actions outside normal user patterns.

These are not perfect measures. They are early-warning signals. A spike in prompt injection attempts does not always mean a breach. A sudden drop in answer quality does not always mean compromise. But both deserve a look before they become a customer-facing incident.

Where Monitoring Fits in the Engineering Workflow

AI security monitoring works best when it is treated as part of release and operations, not as a separate governance task. For example, when a team updates a retrieval pipeline, they should be able to compare document access patterns before and after the release. When a model version changes, the deployment should include baseline checks for refusal behavior, sensitive output handling, and latency under expected load.

For user-facing AI features, engineers also need a clean path from alert to fix. If monitoring detects that a support assistant is exposing internal ticket metadata, the team should know where to look first: the prompt template, the retrieval filter, the permission check, the logging layer, or the model routing code. Without that path, AI security monitoring only proves that something is wrong.

Final Thoughts

Continuous monitoring is critical because AI risk keeps changing after deployment. The most significant failures often come from drift, new dependencies, data exposure, and behavior nobody saw in testing.

A practical monitoring setup does not need to be huge on day one. It needs useful signals, clear ownership, and enough evidence for engineers to debug the problem before users or attackers find it first.