Claude Cowork is Anthropic’s autonomous desktop agent. Unlike a chatbot that responds to prompts, Cowork takes a goal, then independently reads files, writes code, browses the web, and executes tasks on your machine to deliver a finished result. For knowledge workers, it’s a productivity leap. For security teams, it’s a fundamentally different threat surface than anything you’ve governed before.

We recently conducted an in-depth technical analysis of Cowork’s architecture – examining its VM sandbox, network stack, browser integration, and logging mechanisms from the inside. This guide distills what we learned into practical security guidance for teams evaluating or deploying Cowork in their organization.

This isn’t a theoretical framework. Every recommendation here is grounded in either official Anthropic documentation or our own firsthand research findings.

Why Cowork Is Not Just Another AI Tool

Before diving into controls and configurations, it’s worth understanding what makes Cowork genuinely different from a security perspective. This isn’t about hype – it’s about threat modeling accurately.

It executes, not just generates. Traditional AI assistants produce text. Cowork produces outcomes. It runs code in a local VM, manipulates files on your system, and interacts with applications on your behalf. The security implications shift from “what data did the user paste into a chatbot” to “what actions is an autonomous agent performing on our infrastructure.”

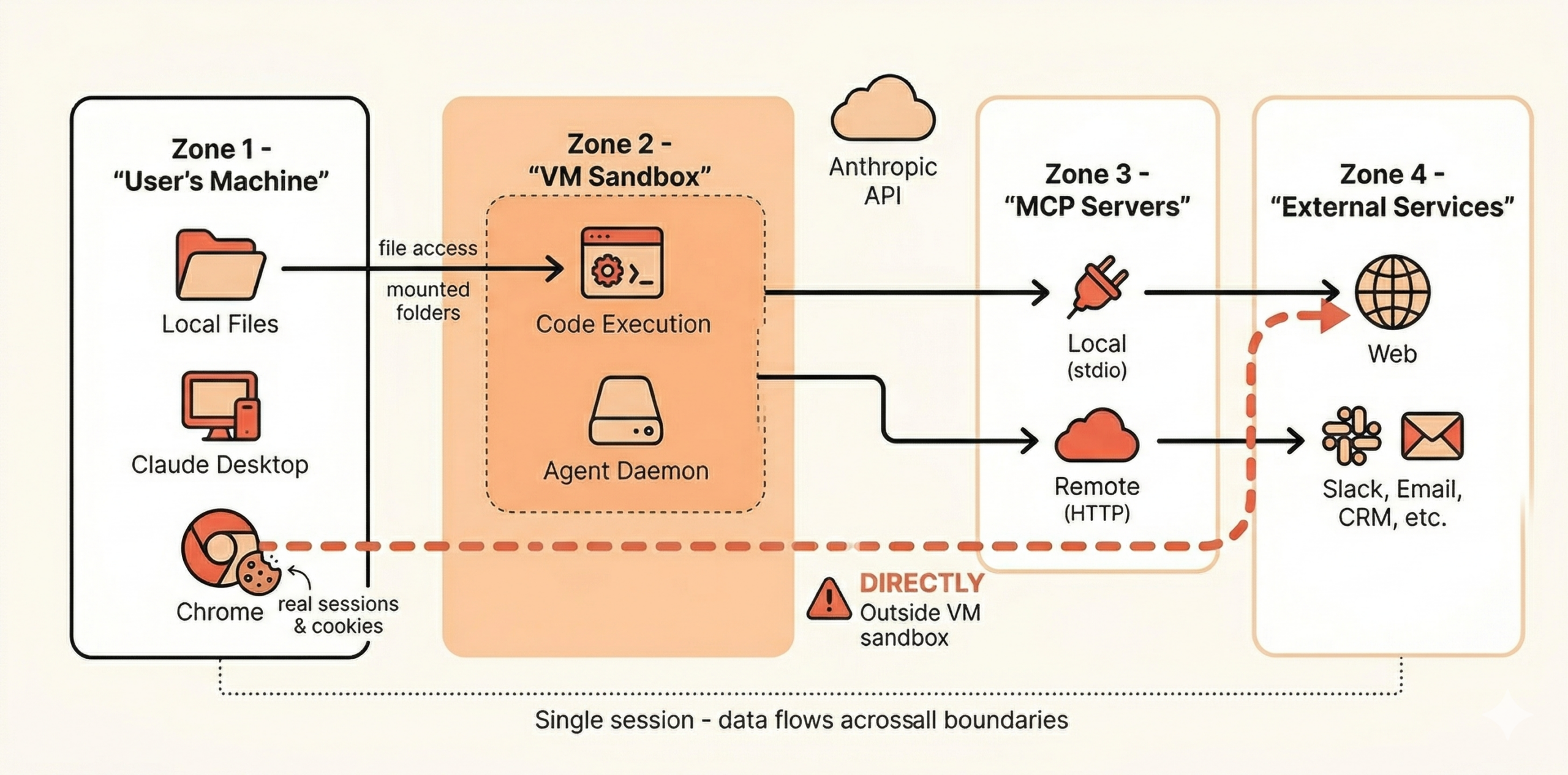

It bridges multiple trust boundaries in a single session. In a typical Cowork session, data can flow from a local spreadsheet, through a browser session authenticated with the user’s cookies, into an MCP-connected service, and back – all without per-step approval. Each of those transitions crosses a trust boundary that your existing controls likely treat as separate.

It operates with the user’s full context. Cowork’s large context window means it holds substantial amounts of information within a single session. If sensitive data enters the session – intentionally or not – it remains accessible for every subsequent action the agent takes. There’s no per-action memory isolation.

It can run unattended. Scheduled tasks execute while the desktop app is open, but the user may not be actively watching. An agent operating without real-time human oversight is a qualitatively different risk from one that requires a human to press “send” for each interaction.

What We Found Under the Hood

Our research into Cowork’s internals revealed several architectural decisions that directly inform how you should think about securing it. We’ll keep this focused on what matters for practitioners – for the full technical deep-dive, see our detailed analysis.

The VM Sandbox: Strong Boundary, Permissive Interior

Cowork executes code inside an isolated virtual machine running Ubuntu 22.04. This is genuinely good security design – the VM itself is the trust boundary, and it effectively contains code execution from reaching the host system.

However, inside that VM, the security posture is deliberately permissive. The agent daemon runs as root, and conventional hardening measures (like restrictive firewall rules) are relaxed within the VM. This is an intentional architectural choice – Anthropic treats the VM boundary as the security perimeter rather than relying on defense-in-depth within it.

What this means for you: The sandbox is solid for its intended purpose – preventing Cowork from escaping to your host system. But don’t assume that anything happening inside the VM has granular security controls. Files shared with Cowork via mounted folders are fully accessible to the agent within that environment.

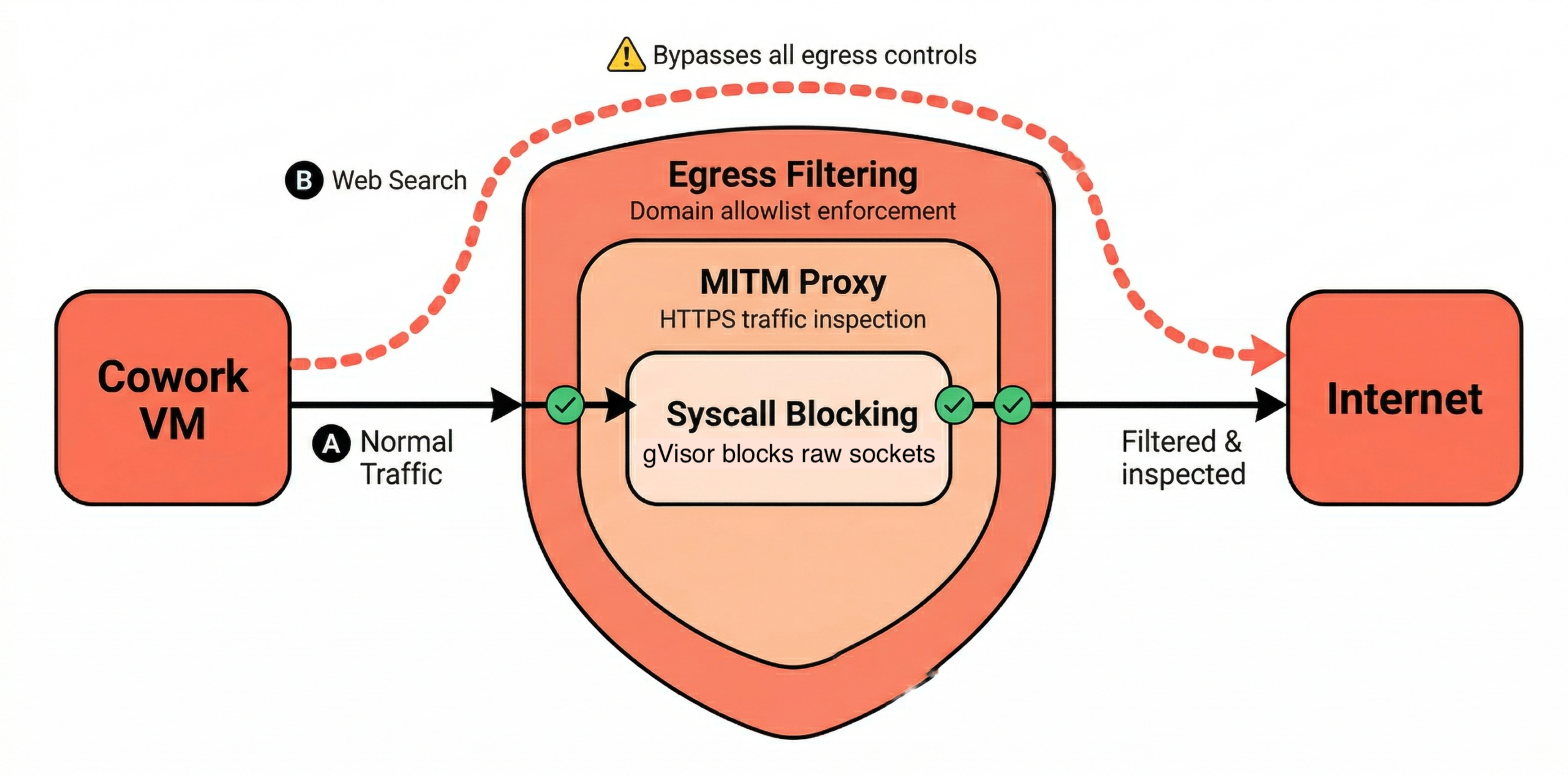

Network Controls: Layered but With Gaps

Cowork’s network security uses a multi-layered approach: syscall-level blocking, a MITM proxy for traffic inspection, and host-side egress filtering. On Enterprise and Team plans, outbound traffic is restricted to a small set of hardcoded domains (api.anthropic.com, pypi.org, github.com, npmjs.org, and a few others), with admins controlling the allowlist.

This is well-designed, with one important caveat: web search bypasses network egress controls entirely. This is documented by Anthropic, but easy to miss. If your security model depends on controlling what data leaves the Cowork environment via network restrictions, web search creates a gap. Admins can disable web search in Organization settings, and we recommend doing so unless it’s explicitly required for approved workflows.

Browser Integration: Outside the Sandbox

This is the finding from our research that has the most direct security implications. Claude’s Chrome integration – the feature that lets Cowork interact with web pages using the user’s browser – operates outside the VM sandbox. It runs through actual Chrome with real cookies and active sessions.

This is an architectural choice that makes sense from a functionality perspective. Users want the agent to interact with their authenticated web applications. But it means that when Cowork browses the web, it’s not contained by the same VM boundary that protects code execution. It’s operating in the user’s actual browser context, with access to their real sessions.

What this means for you: Browser automation is arguably Cowork’s highest-risk capability. Any web page Claude visits can attempt prompt injection, and successful injection occurs in a context that has access to the user’s authenticated sessions. We’ll cover specific mitigation steps below.

Logging: More Persistent Than Expected

Our research found that Cowork generates detailed audit logs (audit.jsonl) containing complete tool interaction transcripts, including bash commands, file operations, and browser actions. Two things stood out:

- Logs persist even after session deletion. If a user deletes a Cowork session through the UI, child session audit logs and the main activity log (main.log) survive on disk. This is relevant both for incident response (useful) and for data retention compliance (potentially problematic).

- Log files have broad read permissions. The log files are world-readable (644 permissions), meaning any process or user on the machine can access them. These logs can contain sensitive content from the session.

What this means for you: For incident response, these local logs are a valuable forensic resource that many teams may not know exists. For data governance, the persistence and permissiveness of these files means your endpoint security posture matters – full-disk encryption is a baseline requirement for any machine running Cowork.
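One way to check this finding on your own endpoints is to scan for log files that carry the world-readable permission bit. The following is a minimal sketch, not a definitive tool: `log_dir` is a hypothetical placeholder for wherever Cowork keeps session data on your machines (the path is an assumption you must supply), and the file names are the two logs described above.

```python
import stat
from pathlib import Path


def find_world_readable(log_dir: Path, patterns=("audit.jsonl", "main.log")) -> list[Path]:
    """Return matching log files under log_dir that any local user can read.

    log_dir is a placeholder (assumption): point it at the directory where
    Cowork session logs live on your endpoints.
    """
    exposed = []
    for pattern in patterns:
        for path in log_dir.rglob(pattern):
            mode = path.stat().st_mode
            # S_IROTH is the "other users may read" bit (the final 4 in 644).
            if stat.S_ISREG(mode) and mode & stat.S_IROTH:
                exposed.append(path)
    return sorted(exposed)
```

Running this from an endpoint management script gives you an inventory of exposed files, which you can then feed into retention or collection processes.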

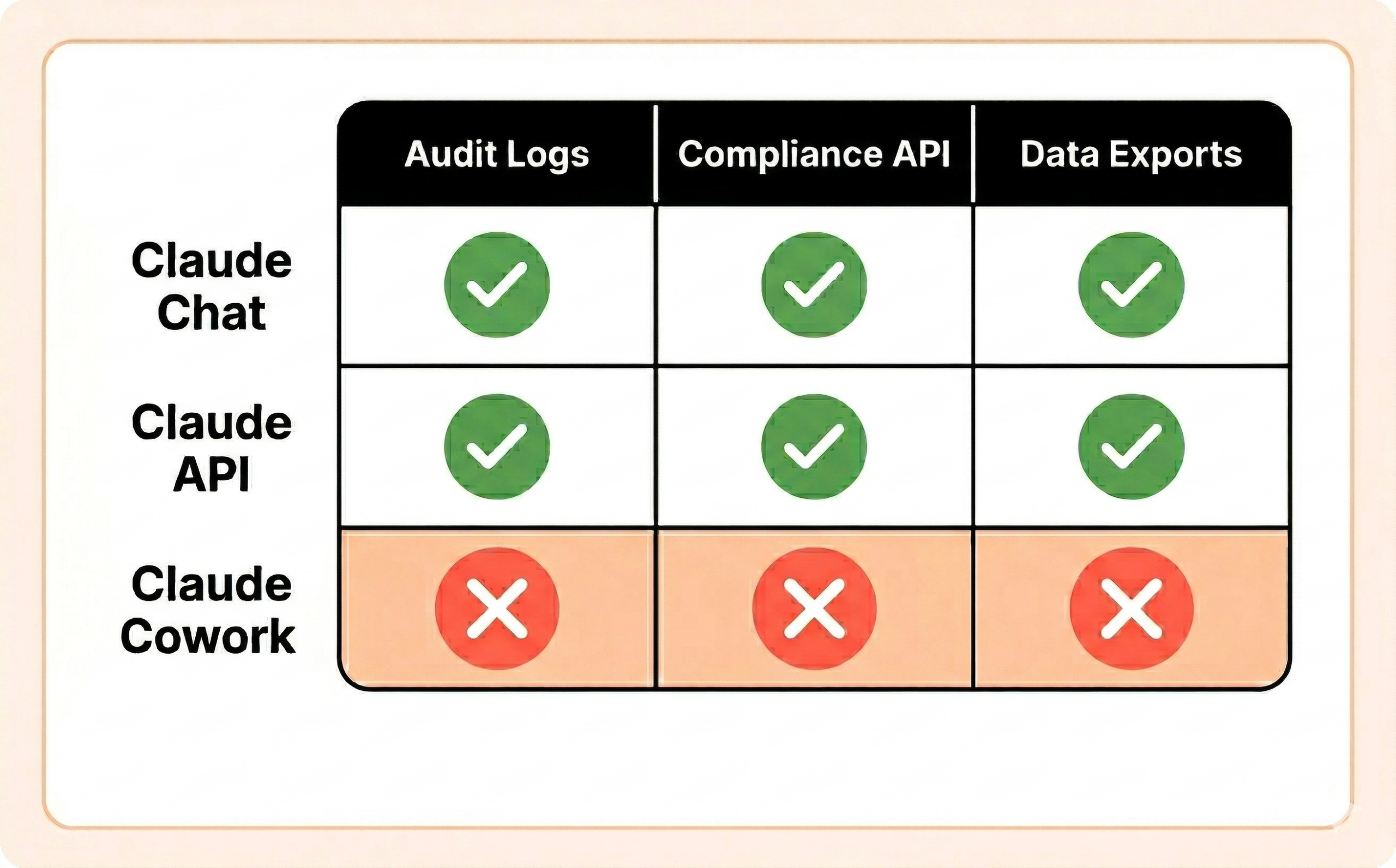

The Audit Visibility Gap: The Elephant in the Room

This deserves its own section because it’s the single most consequential limitation for enterprise deployments.

Cowork activity is not captured in Anthropic’s Audit Logs, Compliance API, or Data Exports. This is explicitly stated in the official documentation and applies across all plan tiers – Enterprise included.

Let that sink in. Your organization may have invested heavily in Anthropic’s enterprise compliance tooling for Claude Chat and API usage. None of that coverage extends to Cowork. There is currently no way to close this gap through configuration alone.

This doesn’t mean you’re flying completely blind. The local audit logs we described above provide forensic data, and there are monitoring approaches that can give you partial visibility. But if your organization requires a compliance-grade audit trail for regulatory purposes (SOX, HIPAA, PCI-DSS, SOC 2), Cowork should not be used for those workloads until Anthropic closes this gap.

For non-regulated workloads, we recommend building compensating controls:

- Endpoint-level monitoring. Since Cowork stores conversation history and logs locally, your EDR and endpoint monitoring become your primary visibility layer. Ensure you have coverage on every machine running Cowork.

- Network-level observation. Even though you can’t see what Cowork is doing inside the session, you can monitor network traffic patterns from machines where it’s active. Unusual egress patterns, unexpected domains, or high-volume data transfers are worth alerting on.

- Periodic local log review. For high-sensitivity deployments, consider a process for periodically collecting and reviewing the local audit.jsonl files. They contain rich session data that can inform both security and compliance reviews.

- OpenTelemetry. Anthropic supports streaming Cowork events to an OTel-compatible endpoint, which can be routed to your SIEM. This gives you usage metrics, tool call activity, and cost data. It’s worth setting up as a visibility layer – but be clear-eyed about what it is and isn’t. OTel requires you to stand up and maintain your own collection infrastructure, user prompt content is not included by default, and it does not constitute a compliance-grade audit trail. It’s a useful signal for detecting anomalies and understanding usage patterns, not a substitute for the audit log coverage that’s currently missing.
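For the periodic local log review, a first pass can be as simple as tallying which tools appear in a collected audit.jsonl file. The sketch below assumes each line is a JSON object and that the tool name lives under a key called `tool`. That key name is hypothetical, since we do not reproduce the log schema here, so adjust `tool_key` after inspecting a real file.

```python
import json
from collections import Counter
from typing import Iterable


def summarize_tool_calls(lines: Iterable[str], tool_key: str = "tool") -> Counter:
    """Count JSONL records per tool name.

    tool_key is an assumed field name, not a documented schema.
    Blank lines are ignored; malformed lines are tallied separately.
    """
    counts: Counter = Counter()
    for line in lines:
        line = line.strip()
        if not line:
            continue
        try:
            record = json.loads(line)
        except json.JSONDecodeError:
            counts["<unparseable>"] += 1
            continue
        if isinstance(record, dict):
            counts[str(record.get(tool_key, "<missing>"))] += 1
    return counts
```

A sudden jump in shell or browser activity for one user between reviews is the kind of signal this tally is meant to surface. It does not replace a proper audit trail.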

Practical Hardening: What to Actually Do

With the architectural context covered, here’s what we recommend. We’ve organized this by priority – start at the top and work down.

1. Decide Whether to Enable Cowork at All (And Know Your Defaults)

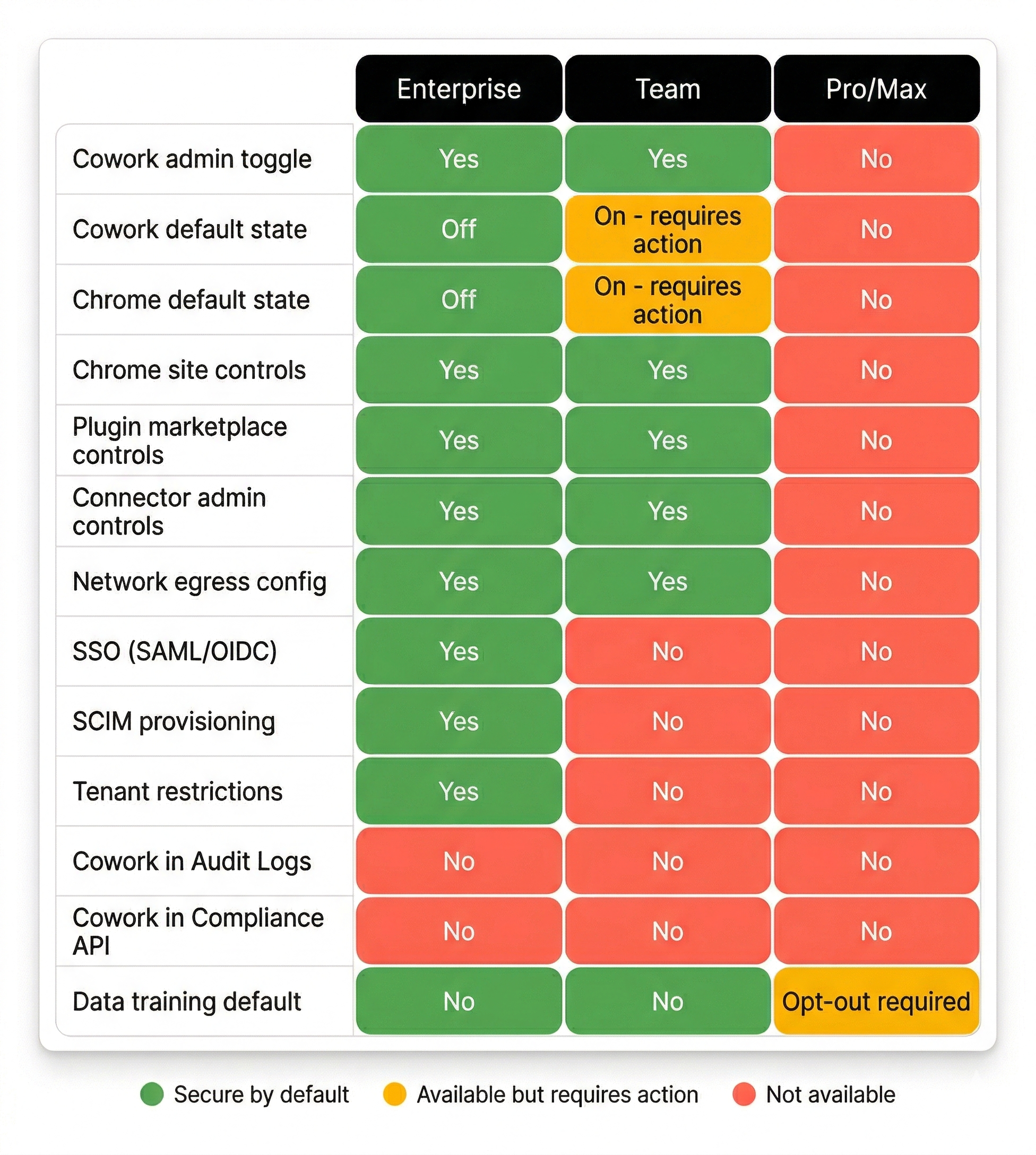

This sounds obvious, but the defaults vary by plan tier in ways that matter:

- Enterprise: Cowork is enabled by default during the research preview, but Chrome integration is disabled by default. Admins can use groups and custom roles for per-team control over Cowork access.

- Team: Both Cowork and Chrome integration are enabled by default. No granular per-team controls – it’s all-or-nothing.

- Pro/Max: Always on. No admin toggles available.

On both Enterprise and Team plans, if you haven’t explicitly reviewed these settings, your users may already have access. If you’re not ready to deploy Cowork, explicitly disable it in Admin Settings > Capabilities. Don’t assume “we haven’t rolled it out” means “nobody is using it.”

2. Control Browser Automation First

Based on our analysis, browser automation is the highest-risk capability. It combines the largest prompt injection attack surface (the open web) with the most sensitive context (the user’s authenticated browser sessions), and operates outside the VM sandbox.

Our recommendation: disable Chrome integration unless you have a specific, justified use case. If you do need it:

- Build a strict allowlist of the specific domains your workflows require. Start narrow – you can always expand.

- Anthropic blocks financial services, banking, crypto, and adult content by default. But the default blocklist does not include: healthcare portals, cloud consoles (AWS, GCP, Azure), password managers, HR/payroll systems, or internal admin tools. Add these to your blocklist explicitly.

- On Enterprise plans, you can disable the Chrome-to-Cowork bridge specifically via Admin Settings > Connectors > Claude in Chrome.
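Before you submit an allowlist and blocklist, it can help to test them against the hosts your workflows actually touch. The sketch below shows one reasonable evaluation order (blocklist wins, then allowlist, otherwise deny) with subdomain-aware matching. It is our own illustration for reviewing proposed lists, not a description of how Anthropic evaluates these settings, and the function names are hypothetical.

```python
def host_matches(host: str, domain: str) -> bool:
    """True if host equals domain or is a subdomain of it."""
    host = host.lower().rstrip(".")
    domain = domain.lower().rstrip(".")
    # Require a dot boundary so "evilexample.com" does not match "example.com".
    return host == domain or host.endswith("." + domain)


def browser_access_allowed(host: str, allowlist: list[str], blocklist: list[str]) -> bool:
    """Deny by default: blocked domains always lose, everything else must be allowlisted."""
    if any(host_matches(host, d) for d in blocklist):
        return False
    return any(host_matches(host, d) for d in allowlist)
```

Starting from deny-by-default keeps the allowlist narrow, and letting the blocklist take precedence means a broad allowlist entry cannot accidentally expose a cloud console or payroll system beneath it.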

3. Lock Down MCP and Plugins

MCP servers are the integration layer that gives Cowork access to external services. Each server or plugin you add expands the agent’s capability surface. Real-world supply chain attacks against this layer have already been demonstrated – CVE-2025-59536 (CVSS 8.7) allowed remote code execution through malicious hooks in project settings files that bypassed the trust dialog when opening a cloned repository, and CVE-2026-21852 (CVSS 5.3) enabled API key exfiltration by overriding the API base URL in project settings to redirect traffic to an attacker-controlled server. Our own research team at Pluto has also identified and disclosed multiple high and critical severity CVEs in popular MCP servers. These include CVE-2026-27825, an arbitrary file write vulnerability in a Confluence MCP server where an unconstrained download path could be exploited to achieve arbitrary code execution, and CVE-2026-33032, where an unauthenticated MCP endpoint allowed remote Nginx takeover. The MCP ecosystem is young, and supply chain risks are real and actively exploited.

Admin-level controls:

- Maintain a centrally managed allowlist of approved MCP servers. On Enterprise plans, deploy this via managed-mcp.json through your MDM solution so users can’t override it.

- Plugin distribution controls are available on Team and Enterprise plans. For each plugin, you can set it as required, available for self-install, installed by default, or hidden. Use this to prevent users from installing unvetted plugins from the marketplace.

- Prefer read-only integrations wherever possible. A connector that can read Slack is meaningfully less risky than one that can post to Slack.

When evaluating individual MCP servers, we recommend applying the same rules we use in our Claude Code hardening bundle:

- Prefer local (stdio) servers over remote (url) servers. A local server is a process you control on the machine. A remote server is a third-party endpoint you’re trusting not to return malicious content – including prompt injection payloads embedded in tool results.

- Scope filesystem servers tightly. Never grant access to / or the user’s home directory. Limit to the specific project directory the task requires.

- Audit each server’s tool list before connecting. A server that exposes a run_shell_command tool gives Claude – and any injected prompt – shell access. Understand exactly what capabilities you’re granting.

- Treat all MCP tool results as untrusted data. A crafted GitHub issue, a poisoned database record, or a malicious Slack message could contain text that Claude interprets as instructions. This is a real and demonstrated prompt injection vector.

- Remove servers you’re not actively using. Every connected server is attack surface, even if nobody is actively querying it.

- Be especially cautious with servers that have write access to production databases, servers that accept arbitrary shell or eval commands, remote servers from sources you don’t fully control, and servers that proxy requests to other LLMs.
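Several of these rules can be turned into a quick pre-approval check. The sketch below reviews a dictionary of server definitions and reports remote servers, overly broad filesystem arguments, and tool names that suggest shell or eval access. The input shape (`url`, `args`, and `tools` keys per server) is a simplified assumption for illustration and is not the schema of managed-mcp.json or any specific client. The keyword list is a heuristic and will miss tools with innocuous names.

```python
from pathlib import PurePosixPath

RISKY_TOOL_HINTS = ("shell", "exec", "eval")  # heuristic keywords, not exhaustive
BROAD_PATHS = {"/", "~", "$HOME"}


def is_broad_path(arg: str) -> bool:
    """True for the filesystem root or something that looks like a whole home directory."""
    if arg in BROAD_PATHS:
        return True
    parts = PurePosixPath(arg).parts
    return len(parts) == 3 and parts[0] == "/" and parts[1] in ("home", "Users")


def review_mcp_servers(servers: dict) -> list[str]:
    """Return human-readable findings for a hypothetical {name: config} mapping."""
    findings = []
    for name, cfg in servers.items():
        if "url" in cfg:
            findings.append(f"{name}: remote server, tool results come from a third party")
        for arg in cfg.get("args", []):
            if is_broad_path(arg):
                findings.append(f"{name}: filesystem scope too broad ({arg})")
        for tool in cfg.get("tools", []):
            if any(hint in tool.lower() for hint in RISKY_TOOL_HINTS):
                findings.append(f"{name}: tool '{tool}' may grant command execution")
    return findings
```

An empty result does not mean a server is safe. It only means none of these coarse checks fired, so a manual review of each server's tool list is still needed.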

4. Scope File Access Tightly

Cowork can only access files in folders you explicitly share with it. This is a good default – but it only works if users exercise discipline.

- Establish a policy that users should create a dedicated working folder for Cowork tasks. Never point it at home directories, Desktop, Downloads, or folders synced with sensitive cloud storage.

- Remember that any file in a shared folder is fully accessible within the VM. If a folder contains a mix of sensitive and non-sensitive files, the agent can see all of it.
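A folder policy like this is easier to enforce when it can be checked mechanically, for example in onboarding scripts or periodic endpoint audits. The sketch below flags a proposed share if it is the home directory, a parent of it, or one of the catch-all folders named above. The function name and the idea of running it as an audit step are our own assumptions. It does not detect cloud-synced folders, which vary by provider.

```python
from pathlib import Path

CATCH_ALL_NAMES = ("Desktop", "Downloads")


def is_risky_share(folder: Path, home: Path) -> bool:
    """True if sharing `folder` would expose the home directory or a catch-all folder."""
    folder, home = folder.resolve(), home.resolve()
    # Sharing home itself, or any ancestor of it, exposes everything beneath.
    if folder == home or folder in home.parents:
        return True
    return any(folder == home / name for name in CATCH_ALL_NAMES)
```

A dedicated folder such as a per-task workspace under the home directory passes this check, which matches the policy of giving Cowork a purpose-built working folder.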

5. Govern Scheduled Tasks

Scheduled tasks are where autonomy risk compounds. A task running on a schedule operates without real-time human oversight, and if that task involves browser automation or external integrations, a prompt injection could potentially persist across multiple executions.

- For initial deployments, restrict scheduled tasks to read-only operations – summaries, reports, data extraction. Tasks that write data, send messages, or interact with external services should require explicit justification.

- There are currently no built-in approval workflows or frequency limits for scheduled tasks. This is a policy gap you’ll need to fill with user training and periodic audits.

- Regularly review active scheduled tasks across your organization to catch scope creep or tasks that are no longer needed.

6. Use Global Instructions as a Defensive Layer

Cowork supports global instructions that apply to all sessions via Settings > Cowork > Global Instructions. These aren’t a security boundary – they’re system-level prompts, and like all prompt-based controls, they can potentially be overridden through injection. But they do raise the bar, and they establish organizational norms.

We maintain a battle-tested set of defensive instructions for Claude Code, and the key principles translate directly to Cowork. Here’s a starting point you can copy into your global instructions:

These are not foolproof – no prompt-based defense is. Treat them as one layer in a defense-in-depth approach, not as a primary control. But in our experience hardening Claude Code deployments, well-crafted instructions meaningfully reduce the success rate of casual prompt injection attempts.

7. Address the Account Switching Problem

This is a major gap that’s easy to overlook. Without tenant restrictions, a user on your corporate network can simply switch to a personal Claude account where Cowork, Chrome, and all plugins are fully enabled with zero admin oversight – effectively bypassing every organizational control you’ve configured. Every toggle you’ve carefully set, every blocklist you’ve curated, every plugin you’ve restricted – none of it applies when someone logs into their personal account on the same machine.

On Enterprise plans, Anthropic supports tenant restrictions to close this gap. Here’s how it works: your network proxy injects an anthropic-allowed-org-ids HTTP header into all HTTPS requests to claude.ai, claude.com, api.anthropic.com, and anthropic.com. When this header is present, Claude will only allow authentication with accounts belonging to your organization – attempts to switch to a personal account are blocked with a tenant_restriction_violation error.

To set this up:

- Find your Organization UUID in Settings > Account (or Admin Settings > Organization, scroll to bottom)

- Configure your TLS-inspecting proxy to inject the header: anthropic-allowed-org-ids: <your-org-uuid>

- This works across the web interface, the desktop app, and API authentication

- Supported on common enterprise proxies including Zscaler ZIA, Palo Alto Prisma Access, Cato Networks, and Netskope

The prerequisite is a proxy capable of TLS inspection – which most enterprises already have deployed. If you have the infrastructure, this is straightforward to implement and we consider it a must-have for any Enterprise Cowork deployment.
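The header-injection logic itself is small. The sketch below shows it as a plain function so you can reason about the behavior before translating it into your proxy's own rule syntax. The host list and header name come from the description above. The function name, the exact-hostname matching, and the decision to strip any client-supplied copy of the header are our assumptions, and real proxies express this through their own policy configuration rather than Python.

```python
RESTRICTED_HOSTS = ("claude.ai", "claude.com", "api.anthropic.com", "anthropic.com")
TENANT_HEADER = "anthropic-allowed-org-ids"


def apply_tenant_restriction(host: str, headers: dict, org_uuid: str) -> dict:
    """Return a copy of headers with the tenant restriction header set for restricted hosts."""
    if host.lower().rstrip(".") not in RESTRICTED_HOSTS:
        return dict(headers)
    # Drop any copy the client sent (header names are case-insensitive),
    # then set the organization's value so it cannot be spoofed from the endpoint.
    out = {k: v for k, v in headers.items() if k.lower() != TENANT_HEADER}
    out[TENANT_HEADER] = org_uuid
    return out
```

Overwriting rather than appending matters here: if a client could supply its own value, the restriction would depend on the endpoint behaving honestly.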

On Team and Pro/Max plans, tenant restrictions are not available. This is a significant control gap. Compensating options include blocking claude.ai at the proxy level entirely (heavy-handed but effective), using Chrome enterprise policies via GPO or MDM to prevent the Claude browser extension from being installed on managed browsers, or relying on endpoint-level monitoring to detect personal account usage.

Prompt Injection: A Managed Risk, Not a Solved One

Prompt injection – where malicious instructions hidden in documents, web pages, or external data sources hijack the agent’s behavior – is the most discussed risk in agentic AI, and for good reason.

Our research confirmed that Anthropic has invested significant effort into hardening prompt injection defenses. The architecture includes multiple layers: reinforcement learning training for instruction recognition, content classifiers that scan untrusted inputs, and action review systems that evaluate proposed actions before execution. These are real, substantive mitigations.

However, we strongly recommend that organizations do not treat these defenses as a security boundary. Prompt injection is a fundamentally unsolved problem in large language models. Defenses can and do reduce the success rate significantly, but no current approach can guarantee 100% prevention. Your security posture should assume that prompt injection will occasionally succeed and focus on limiting what a successful injection can accomplish.

This is exactly why the controls above matter. Tight file scoping limits what data an injection can access. Disabled browser automation removes the most dangerous execution context. Restricted MCP servers limit what actions are available. Read-only connectors ensure that even a successful injection can’t write to external systems. Each control reduces the blast radius of the attacks that get through.

Enterprise vs. Team vs. Pro/Max: Know What You Can Actually Control

Your Anthropic plan tier fundamentally determines what security controls are available to you. This table covers the most security-relevant differences:

The bottom line: Cowork is on by default across all plans – the difference is what you can do about it. Enterprise gives you the most controls (Chrome off by default, groups/custom roles, SSO, tenant restrictions). Team gives you basic admin controls but permissive defaults that require immediate attention. Pro/Max users have essentially no organizational security controls available.

If you’re evaluating Cowork for any deployment where security governance matters, Enterprise is the only tier that provides a reasonable starting point.

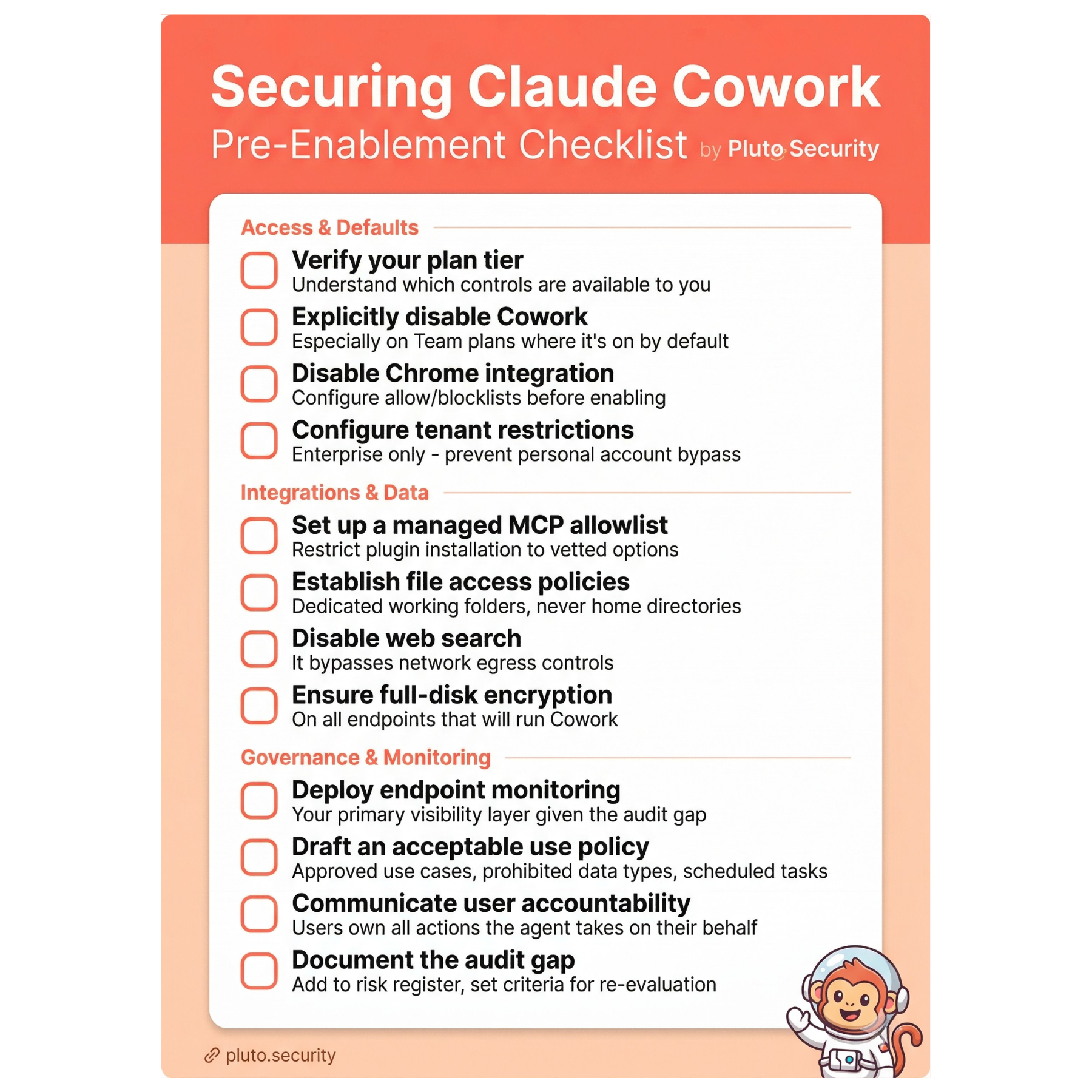

Before You Enable: A Quick Checklist

Claude Cowork represents a real step forward in what AI agents can do for knowledge workers. The security architecture shows genuine thought – the VM sandbox, network controls, and prompt injection defenses are substantive, not theatrical. But it’s also a fundamentally new kind of tool that operates across trust boundaries in ways that existing security frameworks weren’t built to handle.

The good news is that the most impactful security measures are also the most straightforward: disable what you don’t need, scope access tightly, assume prompt injection defenses will occasionally fail, and build your controls around limiting blast radius. You don’t need to solve every theoretical risk before deploying Cowork – you need to understand the architecture well enough to make informed decisions about which risks you’re accepting.

We’ll continue to update this guide as Cowork evolves and as Anthropic addresses current gaps – particularly around audit visibility. If you have questions about specific deployment scenarios or want to discuss findings from our research in more detail, reach out to us at contact@pluto.security.

This guide is based on our independent security research into Claude Cowork’s architecture, combined with official Anthropic documentation. Cowork is currently in research preview, and capabilities and controls may change. All official documentation references are current as of April 2026.

Official documentation referenced:

- Use Claude Cowork on Team and Enterprise Plans

- Use Claude Cowork Safely

- Get Started with Claude Cowork

- Let Claude Use Your Computer in Cowork

- Claude Cowork Product Page

Our research and tools:

- Inside Claude Cowork: How Anthropic’s Autonomous Agent Actually Works

- Claude Code Secure Practices – Hardening Bundle – many of the same principles apply to Cowork deployments