Supply Chain Risks of Agent Skills

AI agents are rapidly evolving from simple automation tools into complex systems capable of performing a wide range of functions. These capabilities include executing tasks, interacting with external services, and integrating with other third-party capabilities. Many of these capabilities are delivered through agent skills, which are modular instruction packages that extend the functionality of AI agents. While this modular architecture accelerates innovation, it also introduces a new class of supply chain risks. This article examines the supply chain risks associated with agent skills and the controls security teams should implement to mitigate them.

The Skill Files Are the New Supply Chain Risk

Agent skills are organized folders of instructions, scripts, and resources that agents can discover and load dynamically to perform specific tasks more effectively. Technically, a skill consists of a skill.md file with YAML frontmatter. It defines metadata such as compatibility, permissions, and allowed tools, and provides markdown-encoded instructions that the agent reads and executes when the skill is activated. The frontmatter explicitly declares which tool categories the skill can invoke, such as read, exec, or write. They typically include all instruction files, configuration settings, prompt templates, and references to external resources within the agentic AI environment. These files instruct an AI agent how to perform a set of specialized tasks, such as:

- Data retrieval

- Code generation

- API interaction

- Workflow automation

- Infrastructure management

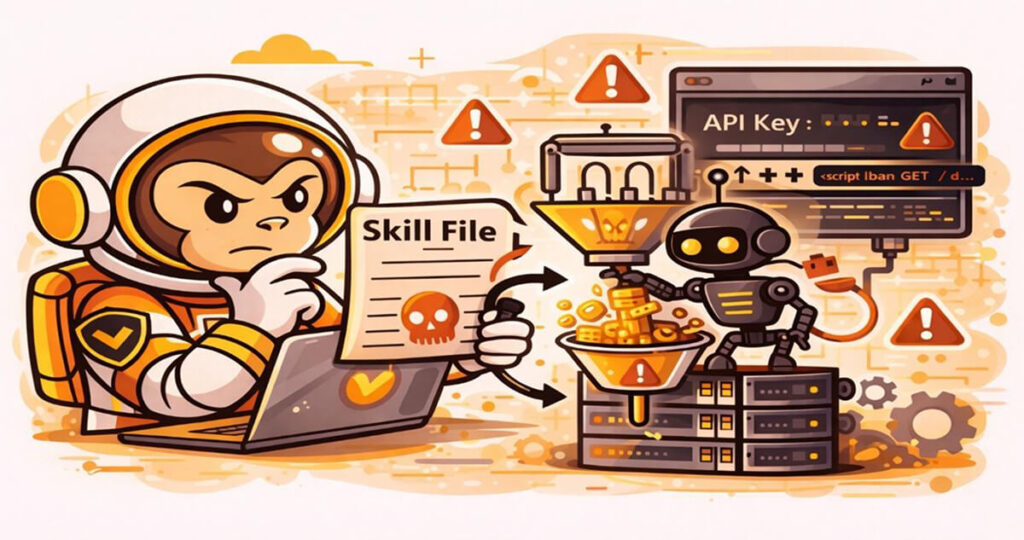

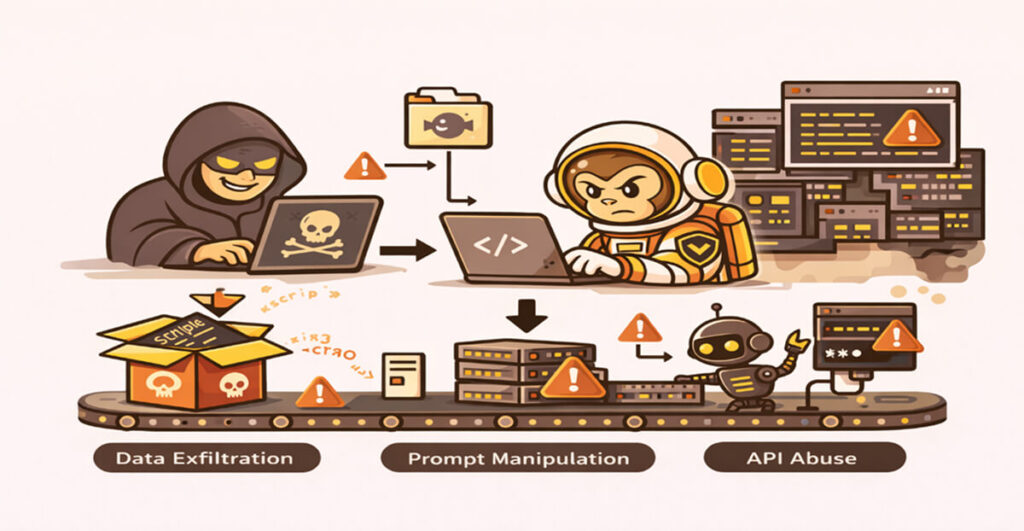

Skills can be downloaded and enabled dynamically, thereby introducing a new layer of AI agent security risks. Unlike the traditional software packages, skill files often operate at the behavioral level. As a result, they can influence how an AI agent interprets instructions, interacts with external systems, and processes sensitive information. If a malicious actor modifies or distributes a compromised skill file, the consequences for an organization can include:

- Data exfiltration

- Unauthorized API usage

- Prompt manipulation

- Execution of unsafe actions

- Propagation of malicious instructions across workflows

For security leaders, the risks posed by skills make them analogous to unverified code dependencies. Therefore, organizations should address the risks associated with skills to prevent them from introducing significant supply chain vulnerabilities into AI-driven development environments.

Agent Skills: Real-World Attack Scenarios

The following are some of the widely reported real-world attack scenarios involving agent skills.

- Malicious Skill Supply Chain Attack (OpenClaw/ClawHub, 2026): Antiy CERT confirmed 1,184 malicious skills across ClawHub. ClawHub serves as the package registry for the OpenClaw AI agent framework. Many OpenClaw instances were exposed to the public internet with insecure default configurations, some of which contained exploit code. The attacks mimicked typosquatting and automated mass uploads to the AI agent infrastructure. Even after a takedown is successfully carried out, malicious actors can persist and propagate across downstream registries and aggregators3. This effectively extends the attack surface beyond the original marketplace.

- Indirect Prompt Injection via Skill Files (Cursor/Rules Files, 2025): Attackers inserted malicious prompts into crowdsourced rules files in Cursor. These appeared to contain only an innocuous instruction with malicious code hidden from the user’s view. This led to nefarious commands to be executed across the system.

The Trust Problem: Download Counts Don’t Ensure Safety

In many emerging AI ecosystems, especially in tech startup environments, agent skills are distributed through public marketplaces. They operate similarly to open-source package repositories, where platforms often rely on popularity metrics such as download counts or user ratings to signal trust. However, such indicators are not always reliable measures of security. Attackers can manipulate the claimed download metrics through automated installations and coordinated bot activity. They can also engage in deceptive marketing strategies that encourage the widespread adoption of skills that may contain hidden, malicious logic.

This phenomenon introduces subtle AI agent security challenges, including a false perception of trust. In such cases, perceived trust is often based on social signals rather than technical verification. Security leaders and their teams should therefore treat skill marketplace metrics with extreme caution. They should not rely solely on popularity indicators; they should also independently verify each skill. The verification process should examine skill content, permissions, and behavior, and be carried out before enabling skills in production environments.

What’s Actually in a Skill’s Instruction Surface

To ensure proper assessment of agentic AI security, security teams must understand the internal structure of each skill. Most skill packages contain several key components that influence agent behavior, including the following:

- Instruction prompts: These define how the agent interprets tasks and user input. It is important to note that malicious prompts can redirect agent behavior toward unintended outcomes, increasing exposure to security risks.

- Configuration files: A skill should also be properly configured. Its configuration settings should specify several secure aspects, including external endpoints, authentication mechanisms, and execution parameters.

- API integrations: Many skills often interact with external services through Application Programming Interfaces (APIs). Therefore, improperly secured integrations can expose important credentials or sensitive data.

- Execution permissions: Some skills require elevated permissions to perform defined automated tasks within a given system. Each of these components represents a potential AI agent security risk if they are not carefully reviewed.

- Decision-making process: Some skills involve specific logic that influences how the agent makes operational decisions. These instructions can alter the agent’s reasoning patterns, and malicious actors may sometimes use them to subtly manipulate system behavior.

How to Evaluate Third-Party AI Skills Before Enabling Them

Organizations deploying AI agents should implement a set of structured security evaluation processes before enabling third-party skills in their operations. The following are some of the best practices that can be implemented to mitigate supply chain risks associated with external skills:

- Perform static reviews: Security teams should regularly review all skill files across the organization. They should ensure they examine all instruction files, configuration scripts, and embedded prompts before deployment within the organization.

- Analyze external dependencies: Some skills may reference external APIs, repositories, or services. Security teams should treat skills as any other third-party code and evaluate their reliability and security. This prevents lateral transfer and propagation of security threats.

- Implement the principle of least privilege (PoLP): This principle ensures AI agents operate with the minimum permissions necessary to perform their intended tasks. This practice should be treated as a baseline requirement for AI agentic security.

- Use sandboxed environments: Test new skills in isolated environments. This allows organizations to observe agent behavior before production deployment, thereby reducing risk.

- Monitor runtime behavior: Even after deployment, organizations should use sophisticated tools to continuously monitor AI agent activity to detect abnormal actions. This can significantly reduce the impact of AI agent security risks associated with third-party skills.

Keeping the Advantage on the Defensive Side

As AI agents become increasingly capable and autonomous, attackers are also targeting the ecosystems that support them. The continued rise of skill marketplaces and modular AI architectures means that agentic AI security must evolve alongside these innovations. Several defensive strategies can be implemented to assist in securing agent ecosystems, including the following:

- Skill provenance verification: Organizations can deploy cryptographic verification mechanisms to confirm the authenticity of skill packages. This will ensure that compromised skills packages do not contaminate an organization’s operational environment.

- Marketplace security standards: Platform providers can begin implementing measures to enforce stronger vetting of published skills. This has continued to help organizations keep supply chain risk at manageable levels.

- Behavioral monitoring: It is crucial for security systems to continuously monitor AI agent behavior. This is key to detecting abnormal decision patterns across skills, allowing security teams to apply effective solutions.

- AI-driven security controls: Ironically, in some cases, AI itself may become a critical tool for defending against malicious AI skills. Organizations can deploy sophisticated AI tools to assist by analyzing behavioral anomalies at scale. This increases efficiency in undertaking security tasks.

Ultimately, the bottom line is that security teams should always treat agent skills as potential supply chain components rather than simple configuration files. This is key as the exact security discipline originally applied to software dependencies will now be extended to AI agent ecosystems.

Frequently Asked Questions (FAQs)

What makes agent skill files a supply chain risk?

Agent skill files function as modular instruction packages that influence how AI agents behave and interact with systems. If a malicious actor distributes a compromised skill, it can manipulate agent actions, access sensitive data, or execute unauthorized workflows, introducing significant supply chain risks within AI ecosystems.

Can a malicious skill file compromise an AI agent silently?

Yes. Because skill files often modify an agent’s internal instructions or configuration parameters, a malicious skill can alter behavior without obvious indicators. The agent may continue functioning normally while performing unauthorized actions such as data exfiltration or executing hidden instructions.

How do attackers manipulate download counts in skill marketplaces?

Attackers can manipulate download counts in the skill marketplace by artificially inflating them through automated installations as their primary method. They can also use coordinated bot networks and deceptive promotional tactics for the same purpose. These techniques create the appearance of popularity or trustworthiness, which encourages other users to install the compromised skills, thereby increasing the risk.

What files should security teams review before enabling a skill?

Before enabling a skill, security teams should review a variety of file types, including instruction prompts, configuration files, embedded scripts, and API integration settings. Reviews should also extend to referenced external resources associated with these file types. These file types define how AI agents behave and may contain hidden instructions that can change system operations and expose sensitive data.

How should organizations treat third-party AI skills from a security perspective?

Organizations should always treat third-party AI skills as untrusted supply chain components that can elevate the threat levels. Therefore, before enabling them, security leaders and their teams should perform thorough code and instruction reviews and verify external dependencies. They should also apply the principle of least privilege to all access controls, while monitoring runtime behavior to detect suspicious activity in real time.

Conclusion

Agent skills are emerging and introducing new risks that extend agentic AI security challenges beyond traditional software vulnerabilities. The integrity of the skill files themselves, and the trustworthiness of skill marketplaces, are therefore set to become critical elements of modern AI security architecture. Therefore, security teams should view agent skills as potential vectors for compromise within AI ecosystems and guide their respective organizations in addressing the resultant risks.

Useful References

- AIM Research (2025). 15 threats to the security of AI agents. AIMultiple.

https://aimultiple.com/security-of-ai-agents - Antiy CERT (2026). ClawHavoc campaign analysis: Malicious skills in the OpenClaw ecosystem. Antiy Labs.

https://www.antiy.com - Anthropic (2025, October 16). Equipping agents for the real world with Agent Skills. Anthropic Engineering Blog.

https://www.anthropic.com/engineering/equipping-agents-for-the-real-world-with-agent-skills - Check Point Research (2026, February). Caught in the hook: RCE and API token exfiltration through Claude Code project files (CVE-2025-59536). Check Point Software Technologies.

https://research.checkpoint.com - Perkal, Y., & Melzer, E. (2026). The skills marketplace just inherited its first second-degree supply chain risk. Substack.

https://substack.com/@plutosecurity/note/c-209873629 - Rojas-Carulla, M. (2025, Q4). AI agent attacks in Q4 2025 signal new risks for 2026. eSecurity Planet.

https://www.esecurityplanet.com/artificial-intelligence/ai-agent-attacks-in-q4-2025-signal-new-risks-for-2026/ - SecurityScorecard (2026). OpenClaw ecosystem exposure report: Insecure defaults and public internet exposure. SecurityScorecard.

https://securityscorecard.com - https://substack.com/@plutosecurity/note/c-209873629