AI tools are no longer limited to a single experimental group within a company. Developers use them to write code. Support teams use them to summarize tickets. Sales teams may use them to draft customer responses. Security teams then face a harder question: how do we enable useful AI adoption without letting every team make its own rules?

That is where AI tool access control becomes useful. It defines who can use which AI tools, what data they can send, and which actions require review before they occur.

What Policy-Based Security Controls for AI Tools Look Like

Policy-based controls work best when they are tied to real workflows, not broad statements like “use AI responsibly.” A policy needs to be specific enough that it can be enforced by systems, not just remembered by people.

For example, an engineering team may be allowed to use an AI coding assistant inside approved repositories, but blocked from pasting production secrets, customer records, or private incident data into external chat tools.

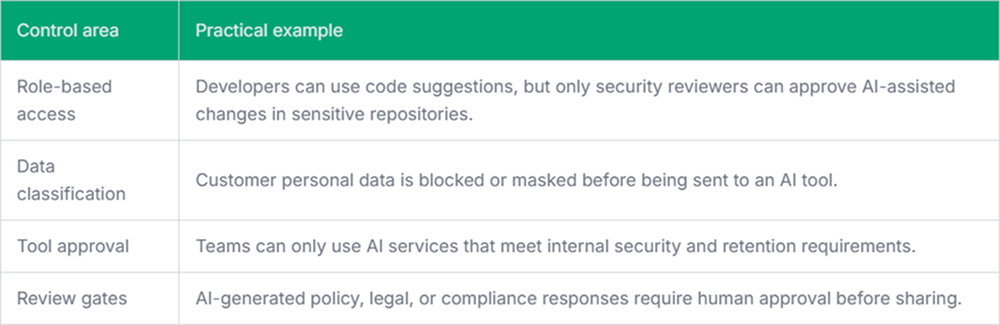

A useful policy usually covers a few areas:

- Approved tools: Which AI tools are allowed for each team or role?

- Data rules: What types of data can be entered, blocked, masked, or logged?

- User scope: Which teams, roles, or groups can access specific capabilities?

- Action limits: Which AI-generated actions need approval before execution?

- Monitoring: What needs to be recorded for audit, investigation, or compliance?
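
One way to make these areas enforceable is to express them as data that a gateway or proxy can read. The sketch below is a minimal illustration in Python, not any product's schema: the `TeamPolicy` class, its field names, and labels such as `code-assistant` or `production_secret` are all hypothetical.

```python
from dataclasses import dataclass
from typing import FrozenSet


@dataclass(frozen=True)
class TeamPolicy:
    """Hypothetical per-team policy record covering the areas listed above."""
    team: str                                  # user scope
    approved_tools: FrozenSet[str]             # approved tools
    blocked_data: FrozenSet[str]               # data rules: never sent
    masked_data: FrozenSet[str]                # data rules: sent only after masking
    actions_needing_approval: FrozenSet[str]   # action limits
    log_requests: bool = True                  # monitoring


# Example values are assumptions made up for illustration.
ENGINEERING = TeamPolicy(
    team="engineering",
    approved_tools=frozenset({"code-assistant"}),
    blocked_data=frozenset({"production_secret", "customer_record", "incident_data"}),
    masked_data=frozenset({"email_address"}),
    actions_needing_approval=frozenset({"merge_pull_request"}),
)


def tool_allowed(policy: TeamPolicy, tool: str) -> bool:
    """Return True if the tool is on the team's approved list."""
    return tool in policy.approved_tools


def needs_approval(policy: TeamPolicy, action: str) -> bool:
    """Return True if an AI-generated action must be reviewed before execution."""
    return action in policy.actions_needing_approval
```

Keeping the policy as a small, typed record makes it easier to review in version control and to test alongside the controls that read it.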

Why Team-Level Policies Matter

The mistake many companies make is treating AI usage as a simple allow-or-block problem. That worked for older software access models. It does not work well for AI tools because the risk often depends on context.

A developer asking an AI assistant to explain a public API is low risk. The same developer pasting an unreleased security patch is not. A finance analyst summarizing a public earnings report is different from uploading payroll data into an unapproved model. The same tool can be safe in one workflow and risky in another.

How AI Usage Policies Are Enforced Across Teams

A written policy is only the first layer. The harder part is enforcing it. Enforcement usually happens through a mix of identity systems, security gateways, browser controls, endpoint agents, data loss prevention tools, and logging pipelines.

For example, a company may use Pluto Security to gain real-time visibility into AI workspaces, identify risky usage, and apply guardrails to AI-driven workflows. If a request contains source code from a restricted repository, the policy can block the prompt or redact parts of it. If the user is from an approved engineering group and the content is safe, the request goes through.
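
The decision described above can be sketched as a small function. This is a generic illustration and not Pluto Security's API: the `PromptRequest` type, the `decide` function, and the group and repository names are assumptions made for the example.

```python
from dataclasses import dataclass
from enum import Enum
from typing import FrozenSet, Optional


class Decision(Enum):
    ALLOW = "allow"
    REDACT = "redact"
    BLOCK = "block"


@dataclass(frozen=True)
class PromptRequest:
    """Hypothetical view of a prompt as seen by an enforcement layer."""
    user_groups: FrozenSet[str]
    source_repo: Optional[str]  # repository the pasted code came from, if known


# Assumed example values; a real deployment would load these from policy.
APPROVED_GROUPS = frozenset({"engineering"})
RESTRICTED_REPOS = frozenset({"payments-core"})


def decide(request: PromptRequest, redact_restricted: bool = True) -> Decision:
    """Block unapproved users, then redact or block restricted code, else allow."""
    if not (request.user_groups & APPROVED_GROUPS):
        return Decision.BLOCK
    if request.source_repo in RESTRICTED_REPOS:
        return Decision.REDACT if redact_restricted else Decision.BLOCK
    return Decision.ALLOW
```

The `redact_restricted` flag reflects the choice in the text between blocking the prompt outright and redacting parts of it; which default is appropriate depends on the team and the data involved.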

This is where policy-based security becomes more than a compliance document. It becomes part of the access layer.

Policies can also be enforced through:

- SSO and group membership for tool access.

- Prompt and response scanning for secrets, credentials, and regulated data.

- Logging for audits and incident review.

- Approval workflows for high-risk AI output.

- Usage reports showing which teams use which tools.
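
As a rough illustration of prompt scanning, the sketch below checks text against a few regular expressions and replaces matches with a placeholder. The pattern set is deliberately small and is an assumption for the example. Production scanners typically combine many more detectors with entropy checks and data classification, so treat this as a starting point only.

```python
import re

# Illustrative patterns only; names and coverage are assumptions.
PATTERNS = {
    "aws_access_key_id": re.compile(r"\bAKIA[0-9A-Z]{16}\b"),
    "private_key_header": re.compile(r"-----BEGIN [A-Z ]*PRIVATE KEY-----"),
    "password_assignment": re.compile(r"(?i)\bpassword\s*[:=]\s*\S+"),
}


def scan(text: str) -> list:
    """Return the sorted names of all patterns found in the text."""
    return sorted(name for name, pattern in PATTERNS.items() if pattern.search(text))


def redact(text: str) -> str:
    """Replace every match with a labeled placeholder."""
    for name, pattern in PATTERNS.items():
        text = pattern.sub(f"[REDACTED:{name}]", text)
    return text


if __name__ == "__main__":
    prompt = "deploy with AKIAABCDEFGHIJKLMNOP and password=hunter2"
    print(scan(prompt))
    print(redact(prompt))
```

Labeling each placeholder with the rule that fired also gives the user a concrete reason when part of a prompt is removed.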

There is still a human side to this. Security teams need to explain why some actions are blocked. Engineers need clear feedback when a prompt is rejected. Otherwise, people will work around the system, usually with personal accounts or unmanaged tools.

Keeping Policies Maintainable

AI policies get messy when they are treated as one-time documents. New tools appear. Model features change. Teams find new use cases. A policy written six months ago may already miss important workflows.

The maintainable version is smaller and more operational. Start with a few clear rules. Tie them to actual controls. Review exceptions regularly. Remove rules that no longer match how teams work.

Security teams should also watch for patterns. If many users keep requesting access to the same blocked workflow, that may mean the policy is too strict, unclear, or missing a safe approved alternative. Blocking alone does not solve that.
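
Spotting those patterns can start with a simple count over denial logs. The sketch below assumes each denied request is recorded as a `(user, workflow)` pair; the function name, the event shape, and the threshold of three distinct users are all hypothetical choices.

```python
from collections import defaultdict
from typing import Iterable, List, Tuple


def workflows_to_review(
    denied_events: Iterable[Tuple[str, str]], min_users: int = 3
) -> List[str]:
    """Return blocked workflows requested by at least `min_users` distinct users."""
    users_by_workflow = defaultdict(set)
    for user, workflow in denied_events:
        users_by_workflow[workflow].add(user)
    return sorted(
        workflow
        for workflow, users in users_by_workflow.items()
        if len(users) >= min_users
    )
```

Counting distinct users rather than raw denials keeps one persistent person from skewing the signal.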

Final Thoughts

AI usage across teams needs more than trust and training. People move fast, and AI tools make it easy to move data where it shouldn’t go.

Policy-based security gives organizations a practical control layer. It helps define access, enforce data rules, and review risky actions without blocking every useful AI workflow. That balance is the real work.